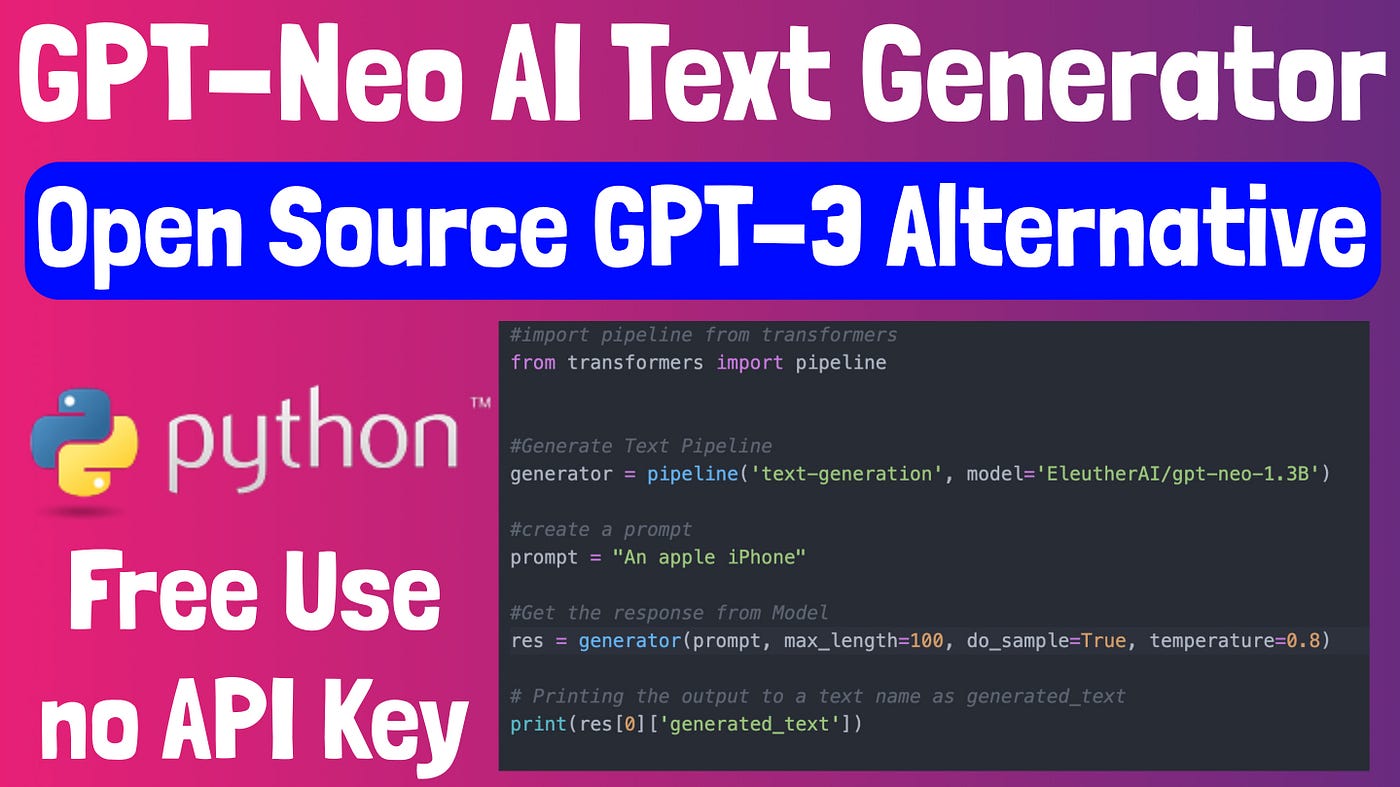

This article covers GPT-Neo, an open-source AI content generator and alternative to GPT-3, which we will access using HuggingFace Transformers and Python.

GPT stands for Generative Pre-trained Transformer. The model is a neural network trained on a large data set of Internet text to generate text based on an input prompt. OpenAI developed GPT, which can be used through an API with an API key. The service is not free, and there are stringent limitations on what you can do with the API keys once you get them.

GPT-Neo was developed by EleutherAI and is accessible through the transformers library. It is an alternative AI engine to GPT-2 and GPT-3. This tutorial shows how to get started with GPT-Neo for text generation.

A written step-by-step guide is available here: https://skhokho.io/notes/python-gpt-neo-setup-and-use-66a7b595170d/view

Online YouTube Video:

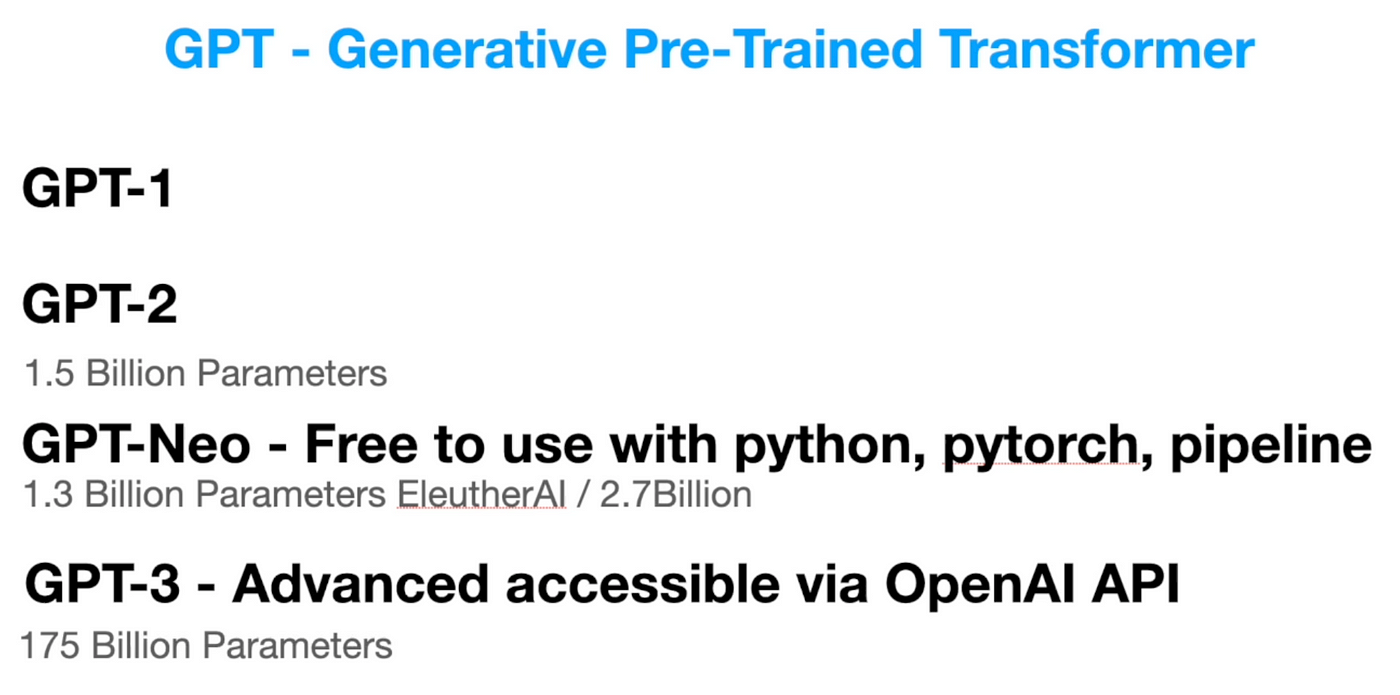

The difference between GPT-Neo and GPT-2 and GPT-3

In a nutshell, GPT-Neo is a GPT-2 clone that performs better than OpenAI’s second-generation GPT-2 engines (see table below).

GPT-Neo is available in two versions from EleutherAI: (1) 1.3 billion parameters and (2) 2.7 billion parameters. The parameter count indicates the model’s size: a larger model is more capable, but it takes up more space and generates text more slowly, whereas a smaller model is quicker but much less capable.

The largest GPT-2 model has 1.5 billion parameters, in the same range as the 1.3 billion GPT-Neo, while GPT-3 has 175 billion.

Furthermore, OpenAI has since released the “instruct” series and made it the default. It takes text generation a step further by letting you give an instruction rather than prompting the text generator with a statement that it must complete.

In conclusion, GPT-3 is more robust and has the instruct series, which offers a great means of engaging with AI models. Nonetheless, GPT-Neo can still be useful in some situations; the advantages of adopting open source pre-trained models include: (1) no waiting times, (2) no API keys required, (3) no permissions or app reviews required.

GPT-Neo Text Generating Models Implementation

You can use the GPT-Neo models in one of two ways: (1) download the models and run them on your own server, or (2) use HuggingFace’s API (minimum cost is $9 per month). One advantage of the API is the speed with which you get generated text back from the model, which makes it arguably the best option if you’re developing a live application.

In this article, we’ll go through the self-hosted method, which consists of the following steps:

- Set up a Virtual Server with Digital Ocean

- Install PyTorch

- Install Transformers

- Run the code to generate text

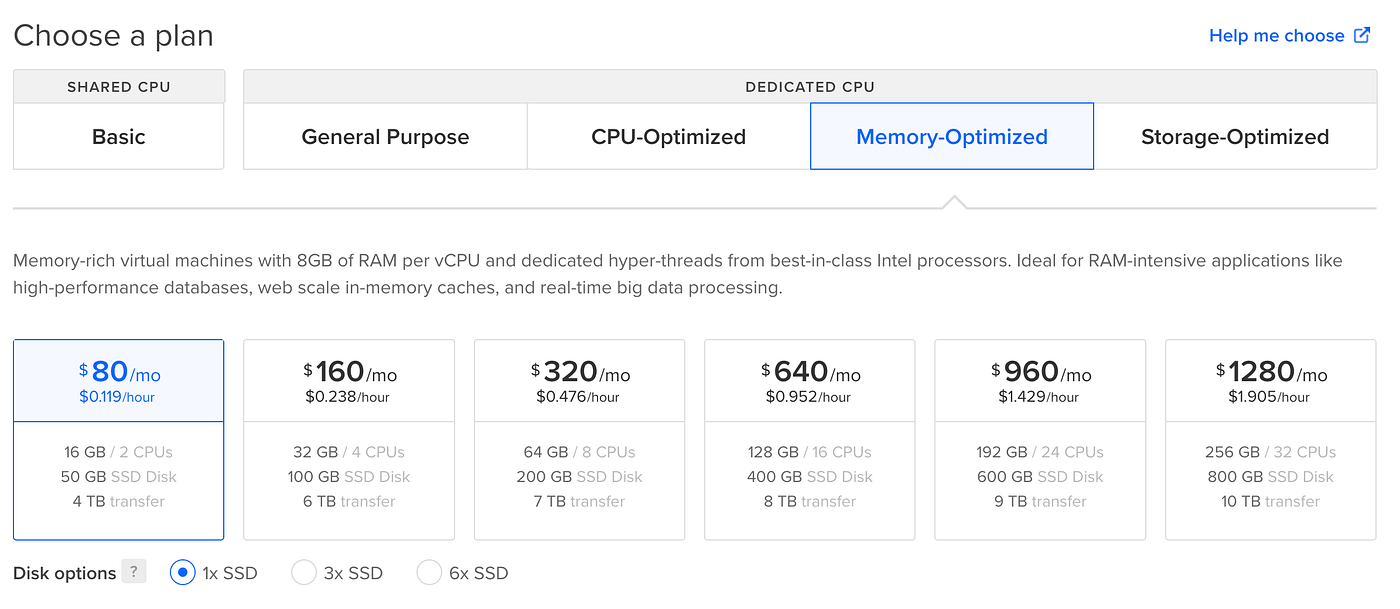

Set up Virtual Server with Digital Ocean

The YouTube video walks you through these steps in greater depth. When buying a droplet, it is critical to check its size.

EleutherAI models are extremely large and take up a lot of disk space and memory. In our testing, we were able to run the 1.3 billion model on a droplet with 16GB of RAM, 2 CPUs, and a 50GB SSD. We did not test the 2.7 billion model, although technically the same droplet should be able to run it.
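
A rough sanity check on droplet size is to multiply the parameter count by the bytes stored per parameter. The sketch below assumes 32-bit weights (4 bytes each) and counts only the weights, so actual memory use while loading and generating will be higher. The helper name is ours and not part of any library.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=4):
    """Approximate memory needed just to hold the model weights, in GB (10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Parameter counts for the two GPT-Neo versions discussed in this article.
for name, params in [("GPT-Neo 1.3B", 1.3e9), ("GPT-Neo 2.7B", 2.7e9)]:
    print(f"{name}: ~{weight_memory_gb(params):.1f} GB of weights")
```

Under that assumption the weights alone come to roughly 5.2 GB and 10.8 GB, which is consistent with the smaller model fitting comfortably in 16GB and the larger one being a tighter fit.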

Because you download the models and run them on your own machine, you need enough disk space and memory to handle them. That is the trade-off for avoiding the API. From a financial standpoint, we cannot justify paying $80 a month to run our own model when OpenAI, with a superior engine, is considerably less expensive.

There may still be compelling reasons for you to run your own model, such as skipping the approval process or pursuing a use case that OpenAI might not authorise.

The remaining stages can be found in the Video Tutorial.

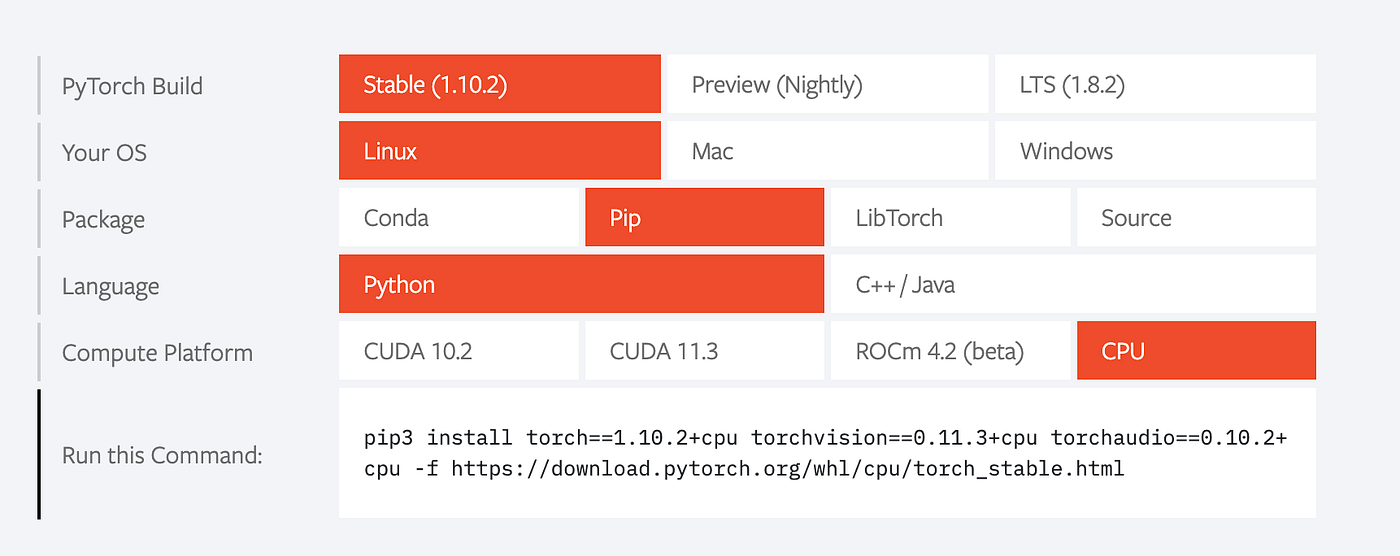

Install PyTorch: OpenSource AI Content Generator

Visit their website here: https://pytorch.org/

If you’re using a Droplet from Digital Ocean, you’ll first have to install the following OS requirements:

Then copy and paste the instructions:

pip install torch==1.10.2+cpu torchvision==0.11.3+cpu torchaudio==0.10.2+cpu -f https://download.pytorch.org/whl/cpu/torch_stable.html

If you’re using a virtual environment, as demonstrated in the lesson, you must use pip rather than pip3, as stated in the guidelines.

Install transformers and run the code: OpenSource AI Content Generator

pip install transformers
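
Before running any model code, it can help to confirm that both packages are importable from the active environment. This is a minimal sketch using only the standard library; the helper name `missing_packages` is ours, not part of PyTorch or Transformers.

```python
import importlib.util


def missing_packages(names):
    """Return the subset of `names` that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_packages(["torch", "transformers"])
if missing:
    print("Not installed:", ", ".join(missing))
else:
    print("torch and transformers are both installed")
```

If a package is reported as missing inside a virtual environment, the usual cause is that it was installed with a pip belonging to a different Python.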

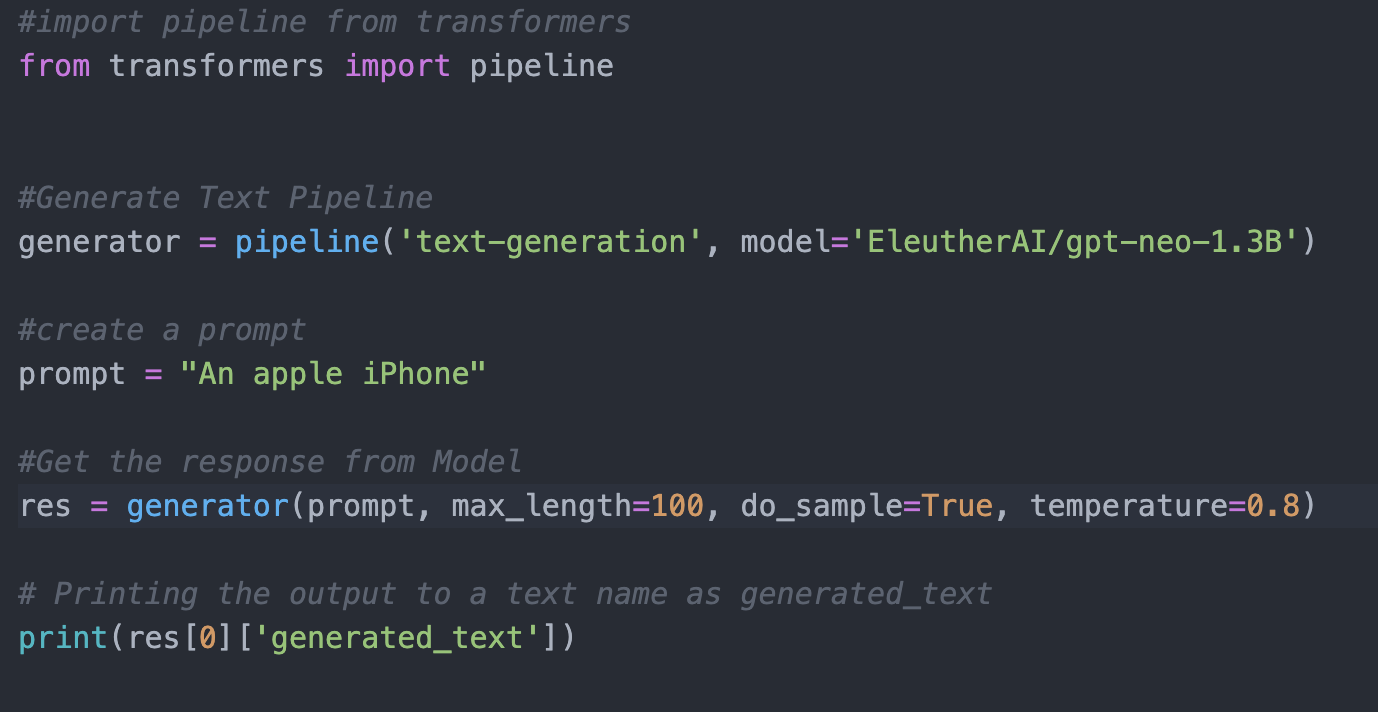

The rest of the code is as follows:
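
A minimal sketch of such a script, assuming the `EleutherAI/gpt-neo-1.3B` checkpoint name on the Hugging Face Hub. The function names, sampling settings, and example prompt are illustrative choices, not the original listing.

```python
def build_generator(model_name="EleutherAI/gpt-neo-1.3B"):
    """Create a text-generation pipeline; the first call downloads the model weights."""
    # Imported here so the heavy library is only loaded when a model is actually built.
    from transformers import pipeline

    return pipeline("text-generation", model=model_name)


def generate_text(generator, prompt, max_length=50):
    """Run the pipeline on `prompt` and return the generated string."""
    outputs = generator(prompt, max_length=max_length, do_sample=True, temperature=0.9)
    # The pipeline returns a list of dicts, one per generated sequence.
    return outputs[0]["generated_text"]
```

With enough memory available, `generator = build_generator()` followed by `print(generate_text(generator, "Artificial intelligence will"))` should print the prompt followed by the model’s continuation. Because sampling is enabled, the output differs from run to run.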

This code may look simple, but there is a lot going on, and it will not run if the machine lacks sufficient memory or you have not completed all of the prerequisite tasks listed earlier in this section.

You might get this response:

Killed

This result indicates that your machine does not have enough RAM to run the model. You have the option of upgrading your machine or running a smaller model. “distilgpt2” is an alternative model that does not demand a lot of memory. Of course, it is much smaller and less capable.

Replace the generator line with this in the code to use a different model:

generator = pipeline('text-generation', model='distilgpt2')
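
One way to avoid the Killed message is to pick the model based on how much RAM the machine reports. The sketch below is an illustration only: the 15 GB threshold is our assumption, set a little under the 16GB droplet that worked in testing because the OS reports slightly less than the nominal size, and `total_ram_gb` relies on `os.sysconf` values that are available on Linux.

```python
import os

# Assumed threshold in GB, not a measured requirement; tune it for your own machine.
GPT_NEO_MIN_RAM_GB = 15


def total_ram_gb():
    """Total physical memory in GB (10**9 bytes); uses Linux sysconf values."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1e9


def choose_model(ram_gb):
    """Return GPT-Neo 1.3B if the assumed threshold is met, otherwise distilgpt2."""
    if ram_gb >= GPT_NEO_MIN_RAM_GB:
        return "EleutherAI/gpt-neo-1.3B"
    return "distilgpt2"
```

The generator line then becomes `generator = pipeline('text-generation', model=choose_model(total_ram_gb()))`.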

You can now generate text with Python for free.

